```python
from torch import nn


class SELayer(nn.Module):
    def __init__(self, channel, reduction=16):
        super(SELayer, self).__init__()
        # Returns a 1x1 feature map per channel; the channel count is unchanged
        self.avg_pool = nn.AdaptiveAvgPool2d(1)
        self.fc = nn.Sequential(
            nn.Linear(channel, channel // reduction, bias=False),
            nn.ReLU(inplace=True),
            nn.Linear(channel // reduction, channel, bias=False),
            nn.Sigmoid()
        )

    def forward(self, x):
        b, c, _, _ = x.size()
        # Global average pooling; the batch and channel dimensions stay the same
        y = self.avg_pool(x).view(b, c)
        # Fully connected layers followed by a sigmoid gate
        y = self.fc(y).view(b, c, 1, 1)
        # Multiply the original feature map by the per-channel weights
        return x * y.expand_as(x)
```
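To make the shapes in `forward` concrete, the following is a minimal sketch that traces one tensor through the same three steps (squeeze, excitation, scale) outside of a module. The input size `(2, 32, 8, 8)` and the variable names are assumptions chosen for illustration; they do not come from the original post.

```python
import torch
from torch import nn

torch.manual_seed(0)

# Illustrative sizes (assumed): batch 2, 32 channels, 8x8 feature map
b, c, h, w = 2, 32, 8, 8
reduction = 16
x = torch.randn(b, c, h, w)

# Squeeze: global average pooling reduces each channel to a single value
squeezed = nn.AdaptiveAvgPool2d(1)(x)      # shape (b, c, 1, 1)
flat = squeezed.view(b, c)                 # shape (b, c)

# Excitation: bottleneck of two linear layers, then a sigmoid gate
fc = nn.Sequential(
    nn.Linear(c, c // reduction, bias=False),
    nn.ReLU(inplace=True),
    nn.Linear(c // reduction, c, bias=False),
    nn.Sigmoid(),
)
weights = fc(flat)                         # shape (b, c), values in [0, 1]

# Scale: reweight every channel of the input by its gate value
out = x * weights.view(b, c, 1, 1).expand_as(x)

print(squeezed.shape, flat.shape, weights.shape, out.shape)
```

Note that `channel // reduction` is the hidden width, so the channel count should be at least `reduction`; with fewer channels the integer division gives a hidden width of zero.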

Additional notes: implementing an SE Block in PyTorch

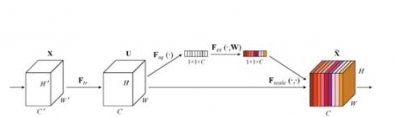

Module diagram from the paper

Code

```python
import torch.nn as nn


class SE_Block(nn.Module):
    def __init__(self, ch_in, reduction=16):
        super(SE_Block, self).__init__()
        self.avg_pool = nn.AdaptiveAvgPool2d(1)  # global adaptive average pooling
        self.fc = nn.Sequential(
            nn.Linear(ch_in, ch_in // reduction, bias=False),
            nn.ReLU(inplace=True),
            nn.Linear(ch_in // reduction, ch_in, bias=False),
            nn.Sigmoid()
        )

    def forward(self, x):
        b, c, _, _ = x.size()
        y = self.avg_pool(x).view(b, c)
        y = self.fc(y).view(b, c, 1, 1)
        return x * y.expand_as(x)
```
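The last line of `forward` calls `expand_as(x)` before multiplying. As a small check, assuming nothing beyond standard PyTorch broadcasting, the sketch below shows that a `(b, c, 1, 1)` weight tensor already broadcasts against a `(b, c, h, w)` input, so the explicit expansion mainly serves readability. The tensor sizes are illustrative assumptions.

```python
import torch

torch.manual_seed(0)

x = torch.randn(2, 32, 8, 8)   # feature map (illustrative size)
y = torch.rand(2, 32, 1, 1)    # stand-in for the per-channel gate values

explicit = x * y.expand_as(x)  # as written in the block above
implicit = x * y               # relies on broadcasting over the last two dims

print(torch.equal(explicit, implicit))
```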

Many variants of SE now exist, but most of them differ only in minor details.
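As one illustration of how small such differences can be, here is a sketch of a variation that replaces the two `nn.Linear` layers with 1x1 convolutions, which removes the need for the `view` reshapes. The class name `ConvSEBlock` is hypothetical and is not taken from the original post; this is only one possible variant, not a canonical one.

```python
import torch
from torch import nn


class ConvSEBlock(nn.Module):  # hypothetical name, for illustration only
    def __init__(self, ch_in, reduction=16):
        super().__init__()
        self.avg_pool = nn.AdaptiveAvgPool2d(1)
        # 1x1 convolutions on a (b, c, 1, 1) tensor act like linear layers
        self.fc = nn.Sequential(
            nn.Conv2d(ch_in, ch_in // reduction, kernel_size=1, bias=False),
            nn.ReLU(inplace=True),
            nn.Conv2d(ch_in // reduction, ch_in, kernel_size=1, bias=False),
            nn.Sigmoid(),
        )

    def forward(self, x):
        # The gate keeps shape (b, c, 1, 1) throughout, so no reshaping is needed
        scale = self.fc(self.avg_pool(x))
        return x * scale


block = ConvSEBlock(ch_in=64)
x = torch.randn(4, 64, 16, 16)
out = block(x)
print(out.shape)
```

With `ch_in=64` and `reduction=16` the hidden width is 4, so this variant has the same number of weights as the linear version (64 × 4 for each of the two layers).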
